The Displaced Plainsman directs his attention to a quick Axios report which links to a Center for a New American Security report on the impact of artificial intelligence on economic, social, and military components of international security. The original report includes a heading that catches the attention of us two bloggers: The End of Truth… under which heading the report authors contend that the forgeries, fake news, and strategic propaganda that artificial intelligence facilitates could make it impossible for us to sort out truth from malevolent fiction:

AI technology could weaken, if not end, recorded evidence’s ability to serve as proof. Some technologies, such as blockchain, may make it possible to authenticate the provenance of video and audio files. These technologies may not mature quickly enough, though. They could also prove too unwieldy to be used in many settings, or simply may not be enough to counteract humans’ cognitive susceptibility toward “seeing is believing.” The result could be the “end of truth,” where people revert to ever more tribalistic and factionalized news sources, each presenting or perceiving their own version of reality.

AI-enabled forgeries are becoming possible at the same time that the world is grappling with renewed challenges of fake news and strategic propaganda. During the 2016 U.S. presidential election, for example, hundreds of millions of Americans were exposed to fake news. The Computational Propaganda Project at Oxford University found that during the election, “professional news content and junk news were shared in a one-to-one ratio, meaning that the amount of junk news shared on Twitter was the same as that of professional news.” A common set of facts and a shared understanding of reality are essential to productive democratic discourse. The simultaneous rise of AI forgery technologies, fake news, and resurgent strategic propaganda poses an immense challenge to democratic governance [Michael Horowitz et al., “Artificial Intelligence and International Security,” Center for a New American Security, 2018.07.10].

I should note that “quick Axios report” is redundant: everything Axios posts is quick. I would suggest another threat to our grasp on truth in public discourse is our dwindling attention, to which Axios responds with its new Reader’s Digest which considers 520 words to be “going deeper.”

Defeating the “deep fakes” the authors foresee will take deep reading, for which the report authors and Axios’s brevity suggest we as a society are not prepared. One tiny drop in that bucket of preparation for and resistance to robopropaganda is my rejection of anonymity. With at least one hostile power interfering in our elections with fake Twitter accounts, I have an obligation to put my name to my content, regularly assert my authenticity, and face the public consequences of getting any facts wrong.

Of course, asserting authenticity is meaningless if I don’t also practice authenticity—i.e., always tell the truth. While I will continue to offer great gobs of homegrown analysis, commentary, argumentation, speculation, and occasional bits of satire, I will also continue to offer factual information, to tell you where I get those facts, and to provide hyperlinks so you can check those facts yourself.

In the comment section, the least I can do is maintain my moderation filter, sending any new name or e-mail to the queue for my review and inquiry of the commenter’s authenticity. Comments remain in limbo until commenters respond with their names and demonstrate that minimal level of mutual trust and authenticity—I know you, you know me, let’s talk. Otherwise, it’s not a far step to imagine the Russian fakers upgrading from Twitter bots to blog-swampers sowing misinformation and discord with disruptive, off-topic propaganda.

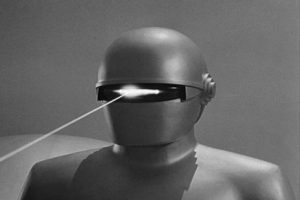

Hard-wiring Asimov’s Three Laws of Robotics, with a supplemental corollary about truth, into every device using artificial intelligence might help. Until the programmers catch up, I’ll keep speaking clearly by name, so you know I’m Kilroy, not Mr. Roboto, and I’ll ask my sources and commenters to do the same… because who wants to spend all day talking to a machine?

I like Axios. They tend to report on what’s important for the day and afterwards, if you have time, you can look elsewhere for more in-depth coverage. Axios is great for the weekday, ProPublica for the weekend.

Oh, I agree, Ben. Axios is a good new news source. Plus, I like your characterization of the niche they fill: Axios for quick reads, ProPublica for depth. Outlets like Axios can play a role in defending truth from deep fakes by providing consistent, reliable, easy-to-read news. But the full war for truth will require lots of in-depth reporting and in-depth reading.

In related news, Twitter’s purge of fake accounts has removed 340K “followers” from Trump’s Twitter, 2M from Kim Kardashian’s, and 3M from Obama’s.

Speaking of artificial intelligence, trump will have something to talk about to Putin, besides the high fives of destroying America.

“WASHINGTON (Reuters) – A federal grand jury on Friday indicted 12 Russian military intelligence officers on charges of hacking the computer networks of 2016 Democratic presidential candidate Hillary Clinton and the Democratic Party, the Justice Department said.” https://www.aol.com/article/news/2018/07/13/us-grand-jury-indicts-12-russian-spies-in-2016-election-hacking/23481528/

Russian military… Sounds like war to me. Might as well get it over with, sooner rather than later.

Cory, I recall that you wanted to make sure I was a real person before posting my comments, so we met and chatted at Dr. Weiland’s place way back when.

When OS started using the Russian bot theme #WalkAway, it struck me that he may well be a bot, but your reminder that you humanized commenters suggests that he is just a tool of a Russian bot rather than a standalone bot. Am I missing anything here?

And Porter frequently states that his research indicates some commenters use different names on their comments, but are really the same person. Is this possible under your screening methods? Do you permit someone you know to be a real person to use a variety of different names for their comments?

Bear, thanks for that conversation! As you know, if commenters and sources trust me with some personal contact, I otherwise protect identities. That adds to my burden of reliability: I’m vouching for my material and for the real, unique identity of my commenters.

Individuals can be duped by Russian bots. Individuals need to look carefully at where their information is coming from and make sure they aren’t adopting deceptive memes injected into our culture by foreign agents or others who may be acting in bad faith.

I do not permit the use of multiple simultaneous identities. I have rarely allowed individuals to change their regular handle, but I do not want one person to engage in any conversation using two different handles. Sockpuppets are forbidden.

Thanks, Cory. Your response helps clear up some potential misconceptions about some of the stranger comments that I have seen posted on your blog.

Cory, if a commenter has multiple internet connections under different names, how do you know different user names aren’t the same person? Internet providers don’t require a driver’s license when you buy their service. I can have another line run into my house under the name Abe Lincoln because someone named Abe Lincoln rents a room in my basement and the bill arrives every month addressed to Abe Lincoln. Likewise, a business account can have several internet connections with several internet addresses.

On July 27, 2016, Russian agent trump orders Russian Intelligence to go after Clinton emails, from today’s indictments of the Russian military Intelligence conspirators.

Here is what the Russian agent said on July 27, 2016:

“Russia, if you’re listening, I hope you’re able to find the 30,000 emails that are missing, I think you will probably be rewarded mightily by our press,” the Republican presidential nominee told reporters. A clear call to arms from one Russian asset to his handlers.

And here is part of the indictment of the 12 Russian military Intelligence conspirators:

“on or about July 27, 2016, the Conspirators attempted after hours to spearphish for the first time email accounts at a domain hosted by a third-party provider and used by Clinton’s personal office. At or around the same time, they also targeted seventy-six email addresses at the domain for the Clinton Campaign.”

Russian military intelligence was listening to their Russian agent, trump. Collusion much? Indeed.

To reiterate my claim that there is at least one person on DFP who posts under different names and no doubt uses different internet addresses, here is a segment from Jerry’s post, above: “…they also targeted seventy-six email addresses at the domain for the Clinton Campaign.” If Hillary could have 76 internet addresses, it’s highly plausible that a commenter could have three or four through a business account.

The Russians even set up opposing factions to go to the streets against one another, because we refuse to believe we could be so damn dumb and lacking even artificial intelligence. We got sold out by a whole lot of republican traitors to Democracy. Did you all see the FBI dude strike back yesterday? Damn, that was good, he opened a big ol’ can of whoop ass to give those traitors. Trey Gowdy looked like a man forced to eat kimchi for a week.

For the record Porter, ol’ jerry rides alone with only one address.

Jerry … One is all we need, huh? I’ve said that it doesn’t matter what you call yourself on DFP, it matters what you say. My personal exception to that rule is when someone hides their real name and condemns the Islamic religion and its followers. Cowardly bigots deserve no quarter!

Indeed, I am good with all of that. I just like to use jerry as it seems appropriate for a number of reasons. Also, I just don’t need the hassle.

So, how do you know that someone is using more than one handle here?

It’s an investigative process using phraseology and an app. And, I know an IT guy that can do magic with a computer. But, we’re off topic.

Ted Kaczynski, the Unabomber, was tracked down by the FBI in this way. Certain areas of South Dakota have a kind of phrase that sticks out for their area. Thanks.

Exactly. Writing patterns are as personally distinctive as fingerprints. Law enforcement has lots of apps to narrow down a source. A teacher who reads papers by a limited group of maybe 25 students, day after day, can tell who’s writing what, even without a name. Here’s a quick example. Last week someone named anonymous posted on Powers’ blog that “Lance Russell is a handsome devil.” Now, who do you think anonymous really was? ha ha ha

Indeed we need to make truth important. Winning means lying by this president, his party and his co-produced news agencies. In 1974 HRC helped nail Nixon. A generation produced the Pentagon Papers and ended a war. They’ve been lying ever since. These indictments are BIG.

We can unite as a Democratic Party and beat these lying b*stards. That’s the only way we can win. They will stir up dissension at every step. Un-united, we will tear ourselves apart. VOTE VOTE VOTE

Another area where Russian bots were used to attack Democrats was using Bernie Sanders sites to attack Hillary. Not only were republicans going after Hillary full force, but so were the bots and Bernie supporters.

republicans and Democrats for days and weeks circulated propaganda about Hillary’s emails, Uranium One and what a hateful evil woman she was. All that Hillary hate effectively split the Democratic Party and handed the election to Putin.

republicans will continue the attacks on Hillary in our mid-terms and 2020 and will include Nancy Pelosi.

Hillary Clinton won by 3 million popular votes. That is always something to remember.

Roger, look at it this way. Mueller just indicted the Russian government. No witch hunt, just traitors working for Putin.

Have you heard any plaintive wails from your congressweasels decrying Drumpf’s upcoming love fest with the leader of a nation whose 12 military intel operatives we just indicted?

Reckon if they did, it would be under their breath so no word gets back to Drumpf because he would place trillions of dollars of tariffs on South Dakota to teach uppity pols a lesson.

Michigan farmers are lamenting the fact they voted for Drumpf and he has abandoned them. What a freaking surprise. This stuff happens when mainstream media ignore all of Drumpf’s shortcomings to concentrate on wingnut dog whistles about all the crimes wingnuts wish HRC had committed.

Let’s try this: hardwiring Jim Wright of Stonekettle Station into wingnut brains and letting him work his magic.

Wright is a Drilling and as such lets Drumpf have all three barrels.

http://www.stonekettle.com/2018/07/folly-vice-and-madness.html

I’ll grant you this, Porter: I am not equipped to counter a full-scale cyberattack by the Russian military or other hostile powers. I take the nominal security measures outlined above and then place my faith in my fellow citizens to behave, just as I do when I ride my bicycle down the streets of Aberdeen armed only with my tire pump and my Swiss Army knife.

Russians behave better than the roving anti-Muslim hate groups in Aberdeen and across the state. One member of this crowd has four identities and I’ll assume three internet connections since he/she posts on your blog as Miranda – Lynn and Jason. Speech is free but hate speech isn’t. It needs to be exposed when it uses three identities to post similar bigotry and give the appearance that it’s more popular than it is.

Porter, I don’t publicize information about my commenters’ identities. However, I maintain that your allegation of sockpuppetry is false.